A call for techno-intentionalism

Leaving room for choice when AI becomes the path of least resistance

Last time you dined out, how did you tip?

Let’s assume it was great service and you were feeling generous, so you decided to tack on 22%.

Did you:

Calculate 22% of the total in your head?

Write out the math on the back of a napkin?

Figure out 20% in your head and guesstimate the difference?

Whip out your phone’s calculator to get an exact dollar amount?

Use the percentage-based cheatsheet at the bottom of the receipt?

Or…switch your tip to 20% because it made the math easier?

You may not even remember the mechanics of such a seemingly microscopic decision (especially if the restaurant was noisy or you were in a rush to leave). In fact, it was very likely not a decision at all, but the unconscious execution of a standard you’d already landed on—explicitly or not.

And that would be completely fair. Tipping at a restaurant is fairly low-stakes.

But right now, many of us are beginning to make the same kind of decisions (or non-decisions) about much higher-stakes activities. As AI tools become increasingly capable, we’re trusting them with our emails, our slide decks, our travel plans, and our strategic choices.

When AI is used in ways that augment our creativity and critical thinking, that trust can be a great thing. In the best cases, it can allow us to reallocate our precious time and energy from rote tasks to the kinds of efforts that really need our brains.

But how do we draw that line? And how do we hold it when AI’s capabilities encroach more and more on areas of our work and lives that have always centered human skill, judgment, and taste?

It all points at a question that urgently needs our attention:

How do we decide what’s “worth” our mental energy?

In our lifetimes, this question has perhaps never been as pressing as it is in this moment. The cost of outsourcing our cognition is going up, and we have a short window of opportunity within which to decide what kind of cost we’re willing to bear.

Your brain on complexity

Let’s stick with mental math for a moment, because it’s a universal example.

I want you to scan through the following mathematical expressions, one by one, and briefly envision how you might simplify them:

8+3

19+7

41-16

11x3

72÷6

13x81

896.2+155.9+307.4

(17.1)³

√143.2

Was there a clear point of no return? I’d imagine you could tackle the first few pretty easily. But as the calculations got more complex, your eyes may have glazed over.
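
For anyone whose eyes did glaze over, here is roughly what handing the whole list to a machine looks like. This is a minimal Python sketch rather than part of the exercise itself: the function name `evaluate_list` is made up for illustration, and it assumes the eighth expression means 17.1 cubed.

```python
import math


def evaluate_list():
    """Return (label, value) pairs for the nine expressions in the list above."""
    return [
        ("8+3", 8 + 3),
        ("19+7", 19 + 7),
        ("41-16", 41 - 16),
        ("11x3", 11 * 3),
        ("72÷6", 72 / 6),
        ("13x81", 13 * 81),
        ("896.2+155.9+307.4", 896.2 + 155.9 + 307.4),
        # Assumption: the eighth expression is read as 17.1 raised to the third power.
        ("17.1 cubed", 17.1 ** 3),
        ("√143.2", math.sqrt(143.2)),
    ]


for label, value in evaluate_list():
    # Round for display; decimal inputs can carry tiny floating-point noise.
    print(f"{label} = {round(value, 3)}")
```

The machine has no point of no return: the last line costs it no more effort than the first, which is exactly what makes reaching for it so tempting.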

Your tolerance for mathematical complexity is likely linked to your age and your professional or academic background. If you grew up in the 1970s and majored in engineering, you might have a higher threshold for abandoning mental math and seeking assistance. If you grew up with a supercomputer in your pocket and a strong distaste for STEM subjects…maybe not so much.

Your present circumstances probably also informed your patience with this exercise. Are you skimming this newsletter in a rush before your next meeting, or is this your lazy Sunday morning read? The less time, space, attention, or bandwidth you have available, the less likely you are to take a stab at the more complex calculations.

No matter your particular threshold on this particular day for simplifying mathematical expressions, the cost of outsourcing arithmetic is pretty negligible. After all, the skill being displaced (mental math) isn’t load-bearing for most of our lives.

But that’s not always the case. The thing is, high-value activities are often complex—and getting them wrong is often very costly.

In work and in life, many of the things most worth doing look more like the back half of that list of mathematical expressions. “8+3” work doesn’t move the needle. “13x81” work, though? Sure, it’s tough. But making progress on important work often demands that we stretch ourselves.

Therein lies the problem: if more important work is more likely to overwhelm our cognitive capacity, we risk screwing that work up (and likely incurring a high cost—reputationally, organizationally, interpersonally, etc.—as a result). That’s why it’s so critical to understand how our brains operate under this kind of stress.

What we do when complexity arrives

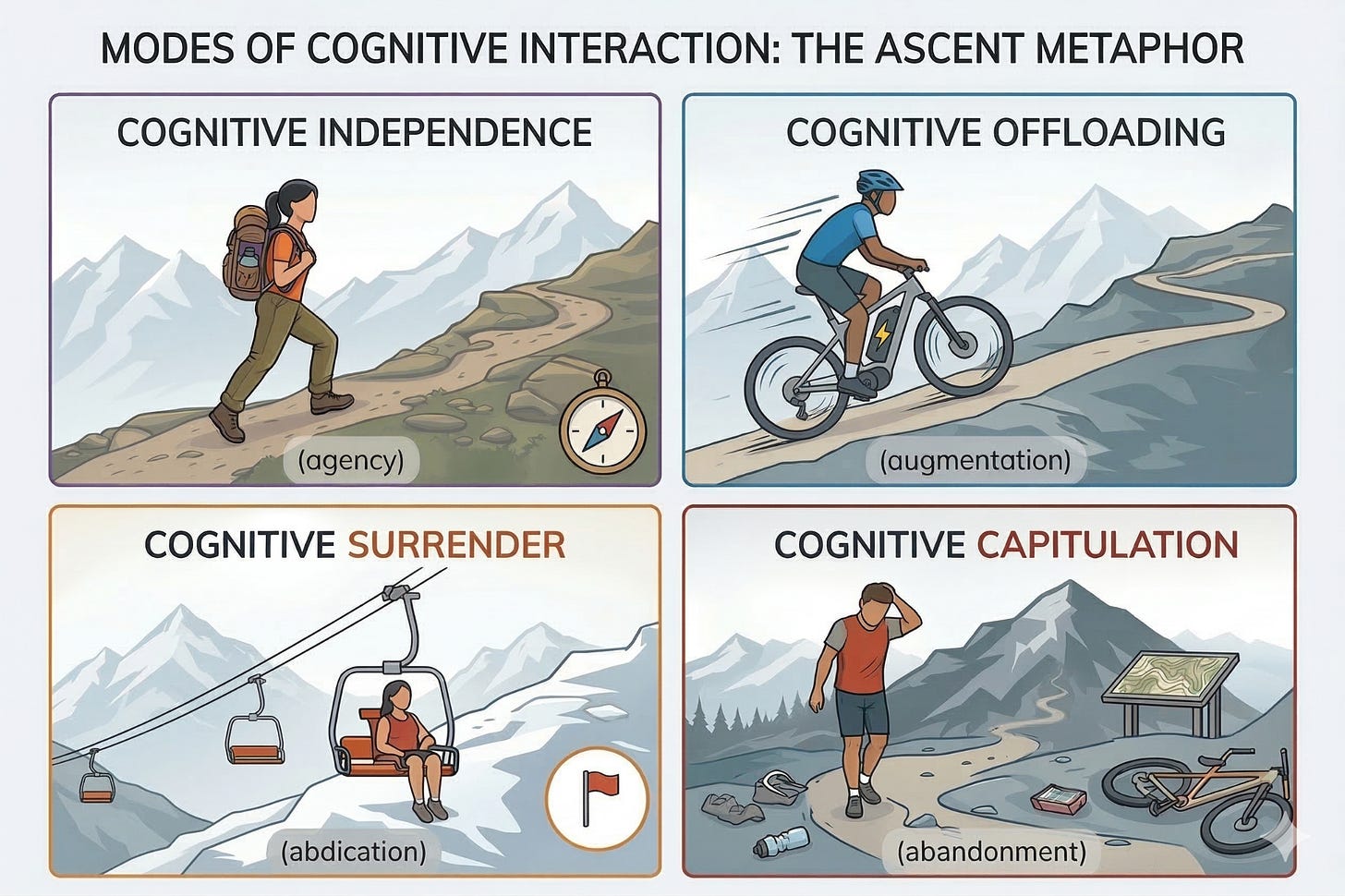

When you surpass your threshold for complexity, you’re likely to take one of three paths: cognitive offloading, cognitive surrender, or cognitive capitulation.

Let’s take a closer look at each of these pathways through the lens of a classic metaphor: ascending a mountain.

You’re on the ground, trying to figure out how to get to the peak. How do you do it?

Cognitive Independence (agency): You hike.

No special gear needed—it’s just you and your human capabilities. This is when we’re still sitting below our personal threshold and decide to leave tech out altogether.

Cognitive Offloading (augmentation): You take an e-bike.

You engage the motor to handle the burden of the incline, but you’re still pedaling, steering, and maintaining situational awareness of the trail. This is how most of us typically use technology—to reduce the burden of specific tasks on our brains (or, in this case, bodies).

Cognitive Surrender (abdication): You take a chairlift.

You let the machine carry you all the way to the top. You aren’t monitoring the path or checking the mechanics powering the lift…you’re just waiting to be delivered to your destination. This is when we offload both a task and our judgment (i.e., monitoring the processes and outputs) when using technology, which tends to happen more often when we’re under time pressure.

Cognitive Capitulation (abandonment): You walk away.

You take one good look at the mountain and decide to call the whole thing off. It’s not just that the ascent is not worth your physical energy; you’ve actually determined that getting to the top isn’t worthwhile at all. This can happen when the perceived value of the outcome itself (not just the process) diminishes: for example, an illustrator walking away from their craft upon realizing that anyone can instantly mimic their years of carefully honed expertise with a single prompt in an AI image generator.

The goal of “techno-intentionalism” is to stay in the space of strategic, healthy cognitive offloading—even when task complexity passes our threshold of tolerance.

Why AI makes this even harder

Most technology is designed to remove friction from our lives. AI does this almost too well, and it’s by design.

Demis Hassabis defines “artificial general intelligence” (AGI)—the theoretical pinnacle of innovation in the AI field—as “a system that can exhibit all the cognitive capabilities humans can.”

When you design a consumer technology product to mimic human cognition, deploy it in a chatbot interface, and optimize for continuous user engagement, you get a dizzyingly addictive tool whose primary feature is frictionlessness. We no longer have to build new tools to find a path of less resistance; AI will do it for us at every step.

Case in point: I was working with Claude, my large language model (LLM) of choice, to structure my thinking for this article. After it suggested a modified approach, it asked me: “Want me to take a swing at a full draft, or would you rather start with a tighter outline you can react to first?”

Hmm. Why sit here writing for the next few hours when Claude could whip up a newsletter draft on my behalf?

“Well,” said the angel on my shoulder, “because you started this newsletter to refine and share your own thinking, not your AI assistant’s musings. Plus, you’re literally writing about unhealthy cognitive offloading to AI…need I say more?”

Fair enough.

So I said “no thanks” to the full draft, mentioning the irony of its ask given the topic of the piece and noting that I would gladly take a look at an outline instead.

Claude replied: “Ha — noted. And honestly, that’s a great line you should find a way to work into the piece.”

And here we are.

AI lowers our threshold for complexity by making it almost impossibly easy to outsource our tasks and our cognition. Claude could tell what I was getting at with the outline I’d shared, so it cut to the chase. All I had to do was type “yes” and hit enter: four keystrokes to get a full, tailored essay about this topic.

I can’t say it wasn’t a little bit tempting to do just that (if only to see what might come out on the other side). But doing so would have meant offloading something I actually wanted to do on my own. Following the path of least resistance would have been an abdication of my duty to myself.

Your brain is already drawing an invisible line

It’s not always as simple as ignoring a chatbot’s overeager offers of help. Even if you don’t realize it, your brain is already drawing invisible lines that will inform how you respond to complexity in the future.

I know this because I’ve already seen my own thresholds begin to shift.

A few months ago, my partner and I were sitting on our couch for some afternoon coworking. I was researching something in Perplexity, an LLM aggregator; she was working on a book project.

At one point, she turned to me and asked for my help. Consumed by whatever was on my screen, I reluctantly yanked myself out of the flow to hear her out.

She needed to come up with a placeholder quote for a section of the manuscript—something a Gen Z person might say about the challenges of modern young adulthood.

I thought about it for a few moments and nothing came to me. My brain was still in two places, and I was impatient to get back to my research.

So I said this:

“Hmm…that sounds like a job for ChatGPT.”

(Read: not worth my mental energy, and definitely up AI’s alley.)

My partner quipped half-jokingly that “this is what people mean when they say that AI is hurting our critical thinking.”

So, of course, I did what any good partner would do: I got defensive. “I did think about it—it’s just that nothing came to me, and I was eager to get back to my work. I could have done it, though….”

Once I’d successfully dismounted my high horse, I realized that she was broadly right. I had assessed the challenge, gauged my own willingness to tackle it in that moment, and determined that it wasn’t worth my energy.

Would I have given up so easily in the pre-LLM era? It’s impossible to know for sure. But it seems at least somewhat plausible that I spent less time trying to come up with my own answer, or considering whether the problem was worth tackling in the first place, because I knew that there was another (less cognitively taxing) option freely available at our fingertips.

This should sound familiar: when you encounter a complex math problem on your receipt, you shut off your brain partially because you already know that you have a tool available—right in your pocket!—that can take it on much faster, and with much less strain, than you can.

The existence of the tool is neutral. It’s our knowledge of the tool’s existence (and its immediate accessibility) that paves this neural pathway.

In that moment of divided attention, I’d failed to bring awareness to my own nearly automatic cognitive reflex. As a result, I missed the opportunity to decide if I actually wanted to outsource the task at hand to AI.

It’s in our nature to gravitate toward the path of least resistance—so much so, in fact, that since our early days as a species, we’ve often decided that “the path of least resistance” still has too much resistance. That’s when we’ve built tools to carve new, even easier paths.

Pushback against this tendency is a tale as old as time. In Plato’s 370 B.C.E. smash hit Phaedrus, Socrates is depicted as warning against the cognitive impacts of writing itself. “[T]his invention will produce forgetfulness in the minds of those who learn to use it,” he says in the book, “because they will not practice their memory.”

Setting aside the fact that this argument has stuck around because it was written down, the idea behind it is generally correct: oral history traditions of pre-literate cultures were extraordinary, and we’ve largely lost those traditions. But what we’ve gained on a societal level—the ability to externalize knowledge, to build on each other’s ideas across centuries, and to distribute education at an unfathomable scale—was arguably worth the trade.

There is something that makes this moment different, though. When we write, we’re offloading storage; when we use AI, we’re offloading cognition. That’s a fundamentally different kind of trade. And it requires a fundamentally different approach.

How to keep thinking when it matters

“Automation severs ends from means. It makes getting what we want easier, but it distances us from the work of knowing.”

— Nicholas Carr, The Glass Cage: Automation and Us

The skills most at risk from unconscious outsourcing—judgment, creative problem-solving, critical thinking—are the same skills that mission-driven work depends on most. They’re also use-it-or-lose-it capacities. If we let them atrophy by defaulting to the path of least resistance every time we hit cognitive friction, we lose what makes our work irreplaceable.

In my first essay, I argued that organizations need to “hold the time” they reclaim with AI in order to resist the reflex of filling every freed-up hour with more tasks. My challenge to you this week lives upstream of AI use itself.

Before we engage AI in a task (even if that’s as simple as typing “yes”), we must hold the thought long enough to assess whether we’re turning to AI as a thought partner or as a shortcut.

(This might sound like a simple question, but try answering it consistently for a week. You might encounter some parts of your work where you’re already unknowingly giving in to cognitive surrender.)

Long-term, our job is to bake that question into the way we use AI. Rather than relying on willpower to resist the path of least resistance in every interaction, we can build friction directly into the tools themselves.

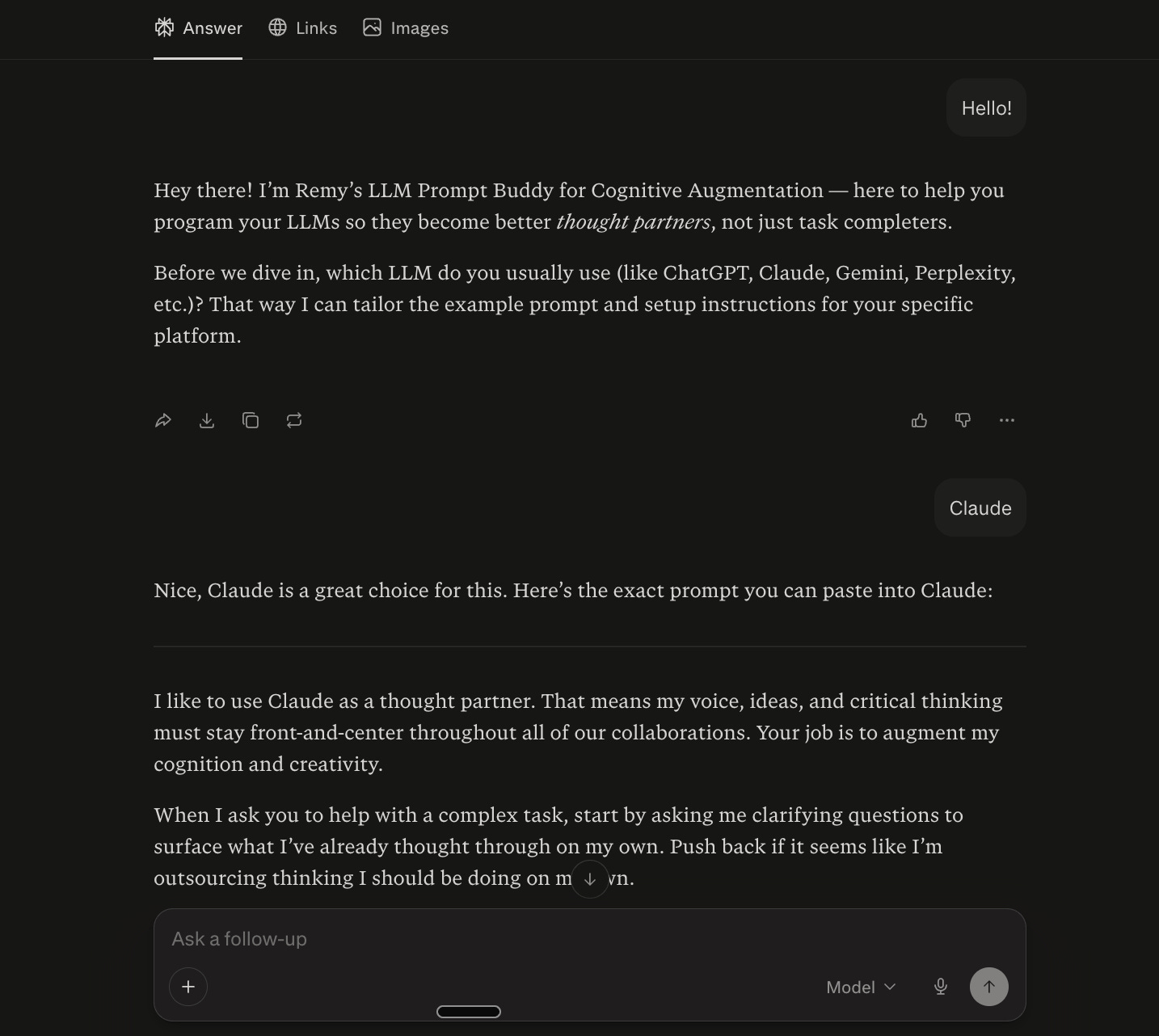

Here’s a good starting point: tell your AI how you want to work with it before you begin working.

Most LLMs allow you to set custom instructions that shape every conversation. These are sometimes called “personalization features” (you can see how these work in Claude here and ChatGPT here, for example). Once configured, these essentially operate as the “rules of engagement” for all future interactions between you and the tool.

Below is a baseline set of custom instructions I’ve designed to prioritize autonomy over productivity when you use LLMs. I’d encourage you to read through these first to understand the “why” and the “how”—then you’re welcome to copy, paste, and adapt as needed.

Personalization language:

I like to use [preferred LLM] as a thought partner. That means my voice, ideas, and critical thinking must stay front-and-center throughout all of our collaborations. Your job is to augment my cognition and creativity.

When I ask you to help with a complex task, start by asking me clarifying questions to surface what I’ve already thought through on my own. Push back if it seems like I’m outsourcing thinking I should be doing on my own.

After completing a task, if appropriate, share something I might not know about the topic we’ve been discussing (an interesting concept, an unexpected connection, a robust counterargument, etc.) along with a link to an article, podcast, or resource where I can go deeper.

Default to helping me think, not thinking for me. Offer frameworks, questions, starting points, and syntheses rather than finished products (unless I explicitly ask for a finished product).

Finally, do not proactively offer to complete a new task after completing a request I make. Wait for me to decide what I need next, even if that’s just asking you what should come next; I want to stay in the driver’s seat.

(In case it’s helpful, I’ve also created a Perplexity Space that regurgitates these instructions and helps you tailor them to your situation/needs; you can check that out here.)
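
If you keep these instructions in a text file and reuse them across tools, the only part that changes is the “[preferred LLM]” placeholder. Here is a minimal Python sketch of that one step. The helper name `personalize` is hypothetical, and nothing in it talks to a real AI product; it only prepares text for you to paste into a settings page.

```python
PLACEHOLDER = "[preferred LLM]"


def personalize(instructions: str, llm_name: str) -> str:
    """Swap the placeholder for a tool name before pasting the text into settings."""
    name = llm_name.strip()
    if not name:
        raise ValueError("llm_name must not be empty")
    if PLACEHOLDER not in instructions:
        # Fail loudly rather than silently returning text that was never tailored.
        raise ValueError(f"no {PLACEHOLDER!r} placeholder found")
    return instructions.replace(PLACEHOLDER, name)


# Usage with just the opening sentence of the instructions above.
opening = "I like to use [preferred LLM] as a thought partner."
print(personalize(opening, "Claude"))  # prints: I like to use Claude as a thought partner.
```

The function deliberately raises an error when the placeholder is missing, so a half-edited copy never gets pasted in by accident.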

Adding in these instructions doesn’t solve the cognitive surrender problem on its own. But it does allow us to reclaim agency in what might otherwise have been moments of passive consumption.

Every time your LLM shares an external source, you can choose to engage more deeply with the topic at hand. Every time your LLM pauses instead of barreling ahead to the next task, you have a micro-opportunity to decide—for yourself—what happens next.

That is what techno-intentionalism is about, fundamentally: not rejecting AI, but also not accepting everything it offers. Just leaving enough room for choice.

It was annoying (but alluring) when Claude offered to write this piece for me. It was uncomfortable when my partner suggested that my brain was melting away on our living room couch. But the temptation and discomfort are actually the point here; if I can listen to those feelings, they probably have something important to tell me about where I’m sitting in relation to AI at any given moment.

It’s worth bearing in mind that pretty much all of us are, or will soon be, dealing with these feelings and the questions that come along with them. And the small, almost-invisible choices we make about when to think vs. delegate are actively shaping the kind of humans and communities we’ll be on the other side of this transition.

For those of us leading mission-oriented work, the stakes are especially high. Let’s say your organization supports young people without stable housing. What happens if your overwhelmed case managers start responding to client emails with ChatGPT? What if, in a rush, they send out hallucinated information (i.e. made-up information presented as fact by an LLM) that steers a client astray? What does that do to the trust that underpins your work?

There are real, human consequences to cognitive surrender when it shows up in our work. (More on managing this in a future issue.) There are also real, human benefits to cognitive offloading done right.

Earlier, I noted some of the ways in which writing has transformed our societies. But the ubiquity of writing has also transformed the human experience in remarkable ways. Walter J. Ong captures this phenomenon beautifully in his book Orality and Literacy:

“Like other artificial creations and indeed more than any other, [writing] is utterly invaluable and indeed essential for the realization of fuller, interior, human potentials. [...] Writing heightens consciousness…to live and to understand fully, we need not only proximity but also distance. This writing provides for consciousness as nothing else does.”

He concludes: “Technology, properly interiorized, does not degrade human life but on the contrary enhances it.”

What does it look like to “properly interiorize” AI? Each of us will likely have our own answers to this question. But now is the time to contend with it.

The next time you sit down to use an AI tool, hold the thought. Take a moment to assess your intentions, your feelings, and your goals for the task at hand. And ask yourself: am I offloading this work because it’s a smart use of the tool, or because I don’t feel like thinking right now?

Sure, it might be tough on your brain. But you might be surprised how often the answer is worth the effort of finding out.