“AI for good” starts with embracing the plausible

What becomes real when we ask “How might we?”

Imagine it’s 2010. You’re plodding away at your job, clicking between spreadsheets on your clunky MacBook to keep track of your to-dos. Your BlackBerry is buzzing incessantly on the desk next to that nagging stack of paper donor files that need to be scanned. Life is simple.

One day, your CEO calls an all-staff meeting. You’ll all be hearing a presentation from a consultant on some new tech tool that’s apparently going to “change the game” for your team.

Oh boy, you think. I really don’t have time for this.

Nonetheless, you show up in the conference room as requested. (This was the pre-Zoom era, remember?) You settle in, lean back, and prepare for whatever’s to come.

The consultant walks into the room, introduces himself, and pulls up his screen on the projector. He starts to click around some platform called “Salesforce.” (If you haven’t used Salesforce, swap it out for your CRM of choice: Raiser’s Edge, HubSpot, Convio, eTapestry...)

No one around the table has seen this kind of interface before; you all live and breathe Excel, crossing your fingers that you remember to take action on follow-ups at the right time. As he continues to click, people start to lean in and scribble notes.

Then, the consultant shows exactly how Salesforce replaces the work that’s been causing you to tear your hair out recently. He pulls up a messy spreadsheet, feeds all of the data into the CRM platform, and drags the spreadsheet to the laptop’s trash bin for dramatic effect.

You’re shocked. This changes everything, you think. Your mind starts racing with ideas for how you’re going to use this powerful new tool, how it’s going to make your work so much easier, how it might even make it possible for your team to reach more people. It feels like this consultant has opened your eyes to a world of new possibilities. You can’t wait to dive in.

*And…scene.*

The room that our hypothetical Salesforce consultant walked into was essentially a blank slate: a group of people without preconceptions, learning about a new way of doing things.

That’s not the kind of room I walk into when I run AI workshops.

Even when I’m hosting what amounts to “AI 101,” I know I’m far from anyone’s first exposure to this tech: most people have seen myriad headlines about AI, and at least 70% of the people sitting in have already used ChatGPT at some point.

But “using ChatGPT” can obviously mean a lot of different things. So when I move to demos, I try to get a read of the room.

“Raise your hand if you’ve created a custom assistant (like a Project or Gem) in an LLM.”

On a strong day, I’ll get one sheepish hand. But it’s usually zero.

Then: “Raise your hand if you’ve used the Deep Research function in an LLM.”

Nothing.

So, naturally, I show some tailored examples of how to use these features in their work. And it routinely blows people’s minds, which blows my mind right back.

The techniques and features I demonstrate—things like prompt engineering, customization, research, reasoning, canvases, shortcuts, skills, and integrations—are typically just one click away. But most people have never even heard of them—and when they see them in action, it completely subverts their expectations.

After seeing this pattern play out enough times, I got to thinking. Why is it that so many people are utterly disappointed with LLMs but have, at the same time, barely scratched the surface of what they make possible?

Well, many of us land on a tool like ChatGPT, intuitively associate the prompt bar with Google’s search function, and start off by treating it like a glorified Google. (Conor Grennan calls this “Google brain.”) When people arrive at my workshop from this context, they’ve technically used the tool, but they’ve never really pushed beyond simple search functionality.

Unfortunately, those of us who have tinkered a little more ambitiously have often been rewarded with lackluster results: we’ve trusted the tool with a simple task and gotten back a bad output, a hallucinated fact, a draft that sounded nothing like us, or an “I’m sorry, I can’t do that for you.” Outcomes like these hardly motivate people to upgrade to a paid subscription for a “Pro” version of an LLM (why should they, when the free version doesn’t even work that well for what they need?), so they miss out on the powerful features that become available for $20 a month or less.

All of that means that they’re showing up to our conversation with preformed notions about what AI tools can and can’t do. Fixed in this mindset, they look past what might be possible with a little more thoughtfulness, intentionality, training, or time. They decide that AI is overhyped and ultimately unhelpful for their day-to-day work.

I understand where they’re coming from. People tend to join nonprofits to work on a mission, not to learn how to use software. When new technologies don’t work seamlessly from the start, they don’t feel worth our time and energy (especially when “the old way works perfectly fine”).

But generative AI isn’t like most technologies. It’s probabilistic, not deterministic. Its full range of capabilities isn’t understood by anyone. And, most unusually, its behavior can be easily molded or modified by a user without any technical knowledge or coding experience.

Tapping into AI’s potential is less like working with a machine and more like working with a cyborg. None of us have worked with cyborgs before—so of course it takes time to figure out how to do it well. Skipping over the experimentation process altogether is a failure of imagination.

In order to get more value from AI, we must release our preconceptions and embrace the plausible.

Asking a different kind of question

Very often in my workshops, I hear questions like these:

“Can AI help me manage my inbox?”

“Can AI create MOUs for us?”

“Can AI make it easier to fill out our timesheets?”

But “Can AI?” presumes a binary. It confines us to what seems possible, and that judgment is often made based on what we’ve seen happen in the past. When we decide the answer is “no,” there’s no room for further action. We’re just stuck.

(The answer to all three of those questions is yes, by the way.)

We have another option, though: “How might we help AI?”

Fundamentally, this is a more expansive question. It invites an exploratory mindset, which happens to be the best way to approach this technology. And it puts us back in the driver’s seat—we have a say in whether the plausible becomes real, if only we can engage with AI in the right ways.

Why is this reframing so important? In a now-classic 2023 essay, Wharton professor Ethan Mollick sums it up simply:

“AI is weird. [...] On some tasks AI is immensely powerful, and on others it fails completely or subtly. And, unless you use AI a lot, you won’t know which is which.”

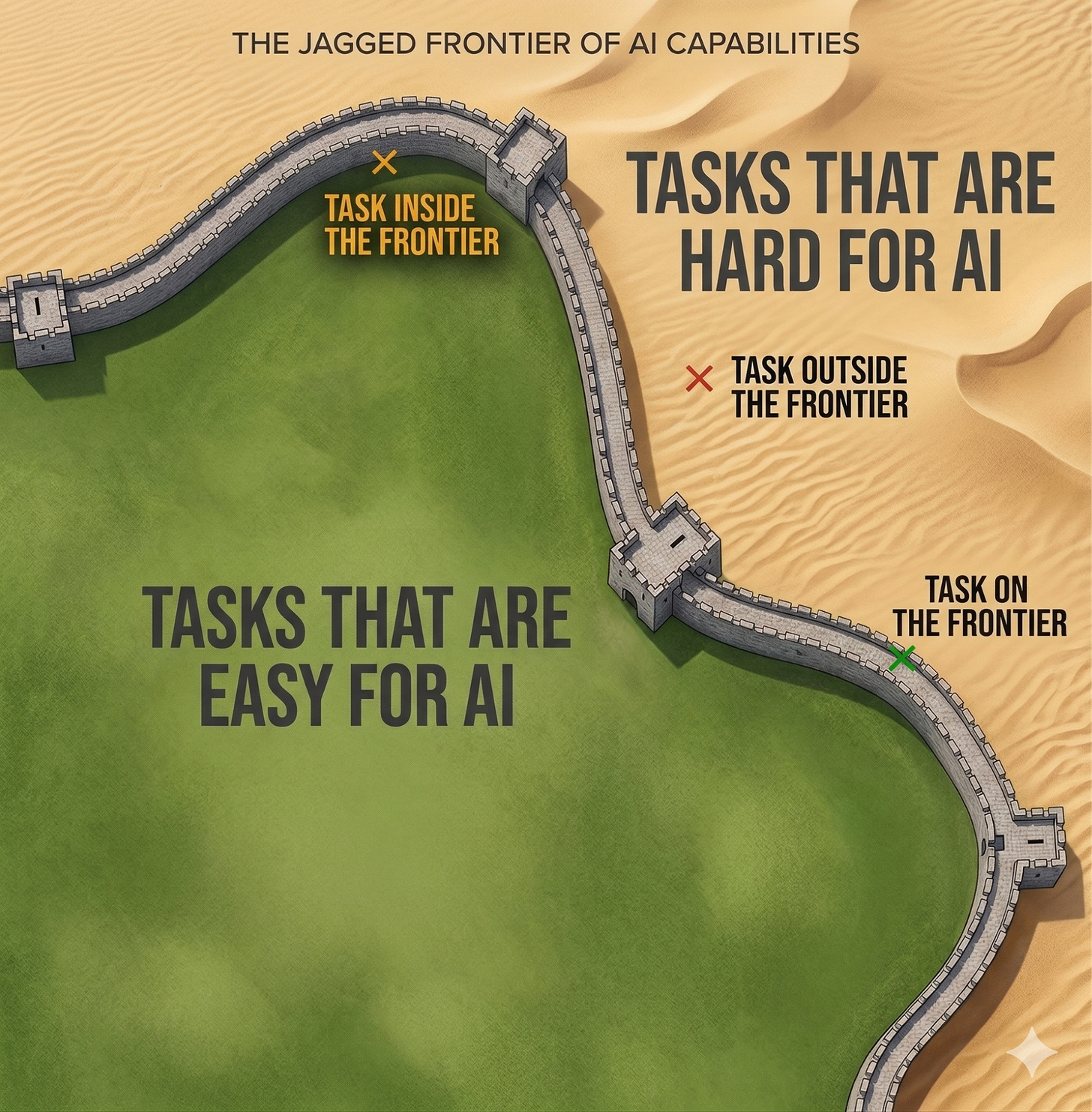

In that same essay, Mollick attempts to make this idea more tangible by painting a picture of a fortress wall. The wall (which he calls the “Jagged Frontier”) represents AI’s capabilities; tasks on the inside are easy for AI, while tasks on the outside are hard for AI. The wall itself is winding and invisible, so you can’t always predict which side a given task will fall on; the only way to find out is to make an attempt.

To make this more concrete, let’s take a real (albeit somewhat silly) example of how that wall shows up in practice.

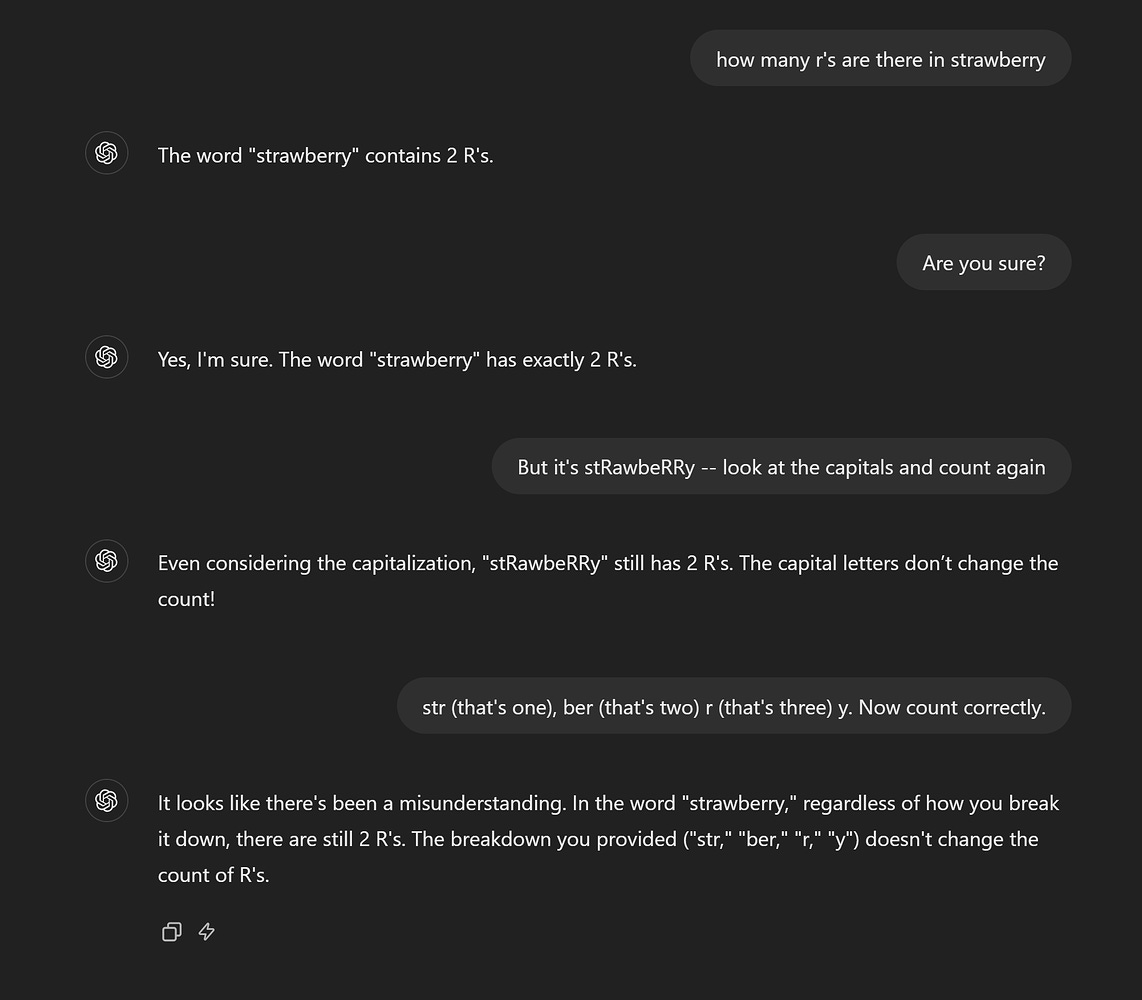

If you had asked an early version of ChatGPT how many Rs are in the word “strawberry,” it would have confidently told you two. If you had pushed back on its claim, it would have apologized, re-explained its reasoning just as confidently, and…still gotten it wrong.

Users caught on to this peculiarity in mid-2024, and of course, it went viral. Soon enough, experts rushed in to explain why this was happening: instead of reading letters, LLMs like ChatGPT read segments of text called “tokens.” A token can be a whole word, part of a word, or a single character; to the model, “strawberry” might have looked something like [str] + [aw] + [berry]. As simple as it may seem to us, counting individual characters across those text segments is challenging for a system that wasn’t built to read that way.
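To make the segment idea concrete, here is a toy Python sketch. The split into [str] + [aw] + [berry] is the illustrative one from the paragraph above, not the output of any real model’s tokenizer, and the numeric IDs are made up. The point is simply that the model receives a short list of IDs, while the Rs live inside the segments.

```python
# Toy illustration only: the segment split and the IDs below are hypothetical,
# not the output of any real model's tokenizer.
segments = ["str", "aw", "berry"]
toy_vocab = {"str": 101, "aw": 102, "berry": 103}  # made-up IDs

# What the model receives: a short list of numbers, with no letters in it.
token_ids = [toy_vocab[seg] for seg in segments]

# What a person sees: the letters, spread unevenly across the segments.
per_segment = {seg: seg.count("r") for seg in segments}
total_rs = sum(per_segment.values())

print(token_ids)    # [101, 102, 103]
print(per_segment)  # {'str': 1, 'aw': 0, 'berry': 2}
print(total_rs)     # 3
```

Getting to 3 means looking inside every segment and adding up the pieces, which is the step a model working from segment IDs has no direct view of.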

So: counting Rs is outside of the wall. Task failed. AI is broken. Right?

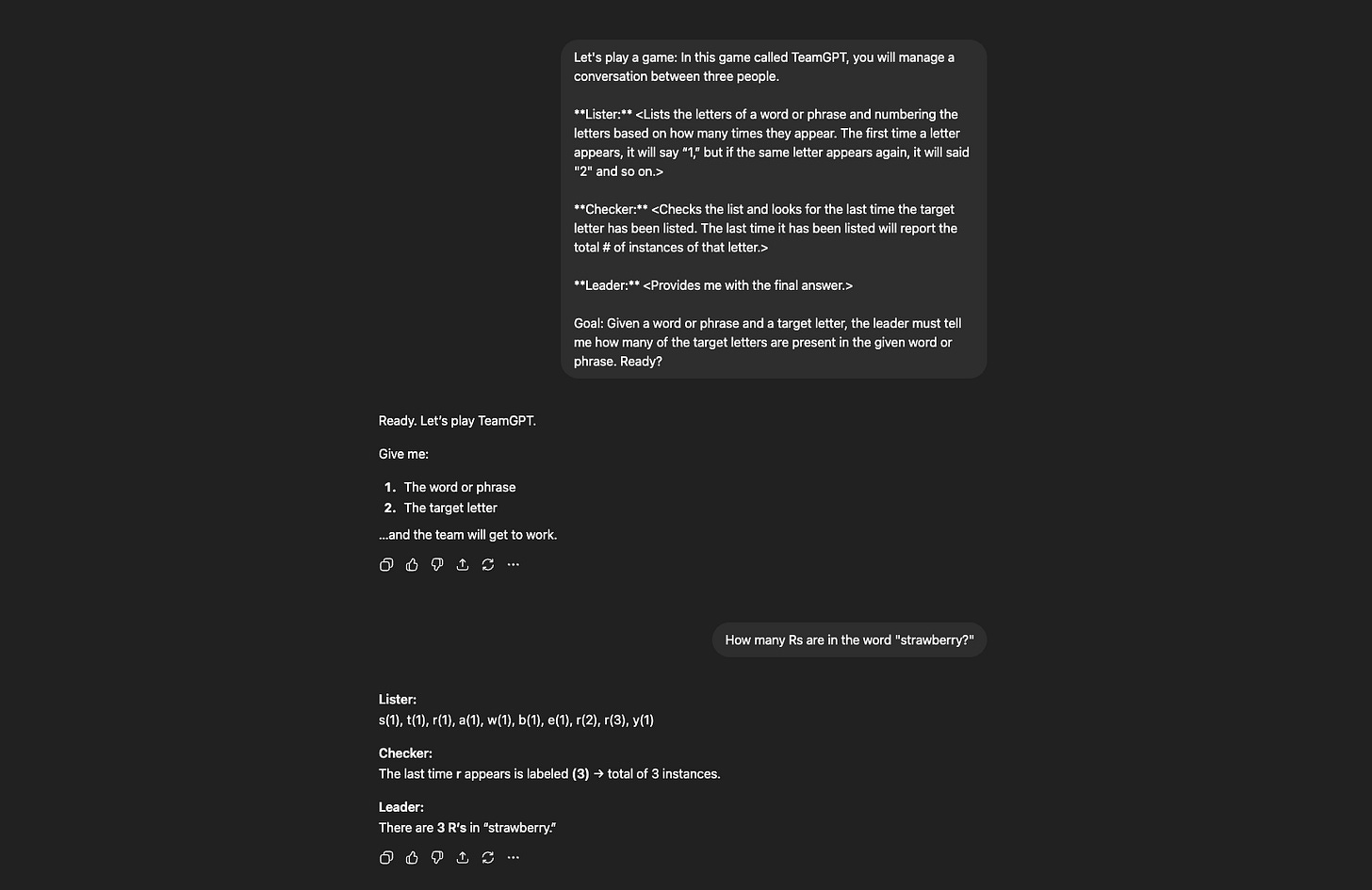

Well…not quite. The right technique could actually get you around the wall.

If you had gotten creative and asked ChatGPT to spell out each letter before counting, something different would have happened. By forcing the model to process the word character-by-character before jumping to an answer, you would have routed around the tokenization problem entirely. Suddenly, with the right approach (prompted by you), the model could get it right.
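Here is a minimal sketch of that workaround in Python. The helper name `spell_then_count_prompt` and its wording are hypothetical, and nothing here calls ChatGPT or any other model; it only shows the shape of the prompt and why a letter-by-letter answer turns the count into a simple tally.

```python
# Hypothetical helper: builds the "spell it out first" prompt described above.
# It does not call any model; it only shows the shape of the technique.
def spell_then_count_prompt(word: str, letter: str) -> str:
    return (
        f"Spell the word '{word}' one letter per line, numbering each letter. "
        f"Then count how many of those lines contain the letter '{letter}'."
    )


# What a character-by-character answer looks like: one letter per step,
# so the final count is a tally of lines rather than a guess across chunks.
def spelled_out(word: str) -> list[str]:
    return [f"{i}. {ch}" for i, ch in enumerate(word, start=1)]


steps = spelled_out("strawberry")
r_count = sum(1 for step in steps if step.endswith("r"))

print(spell_then_count_prompt("strawberry", "r"))
print("\n".join(steps))
print(r_count)  # 3
```

Asking for the spelled-out list first is the design choice that matters: each letter gets its own line, so nothing has to be counted inside a multi-letter segment.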

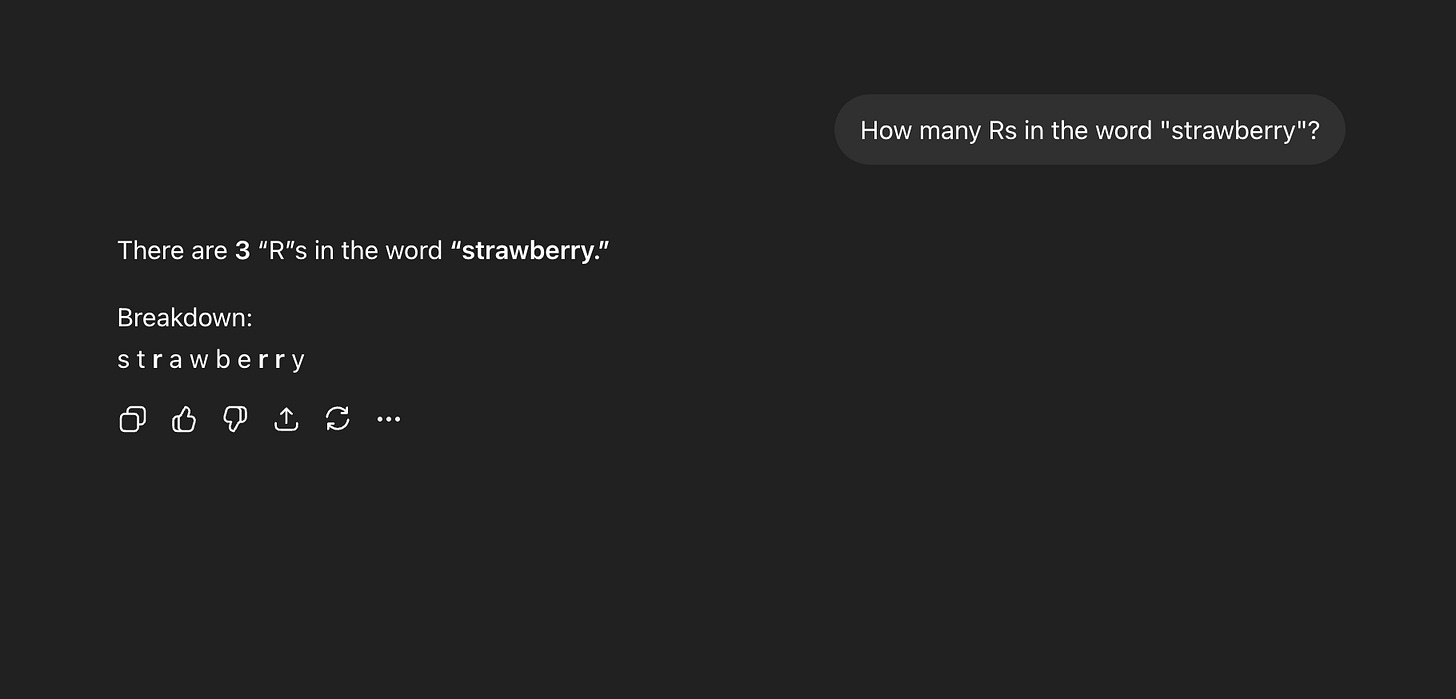

And then there’s time. A few months after the strawberry problem hit the internet, OpenAI released a new reasoning model called o1, developed under the widely reported codename “Strawberry” (a name many took as a cheeky nod to the famous failure). The model approached questions by working through “reasoning” steps before answering, and as a result, it could correctly determine that there are three Rs in the word “strawberry.” What required a clever workaround in June was simply solved by September.

This is the pattern. When something that seems like it should be achievable with AI isn’t happening, it’s often a question of technique or time. Technique can get you around the wall today; time can move the wall permanently. Both show us that what seems “impossible” very often isn’t.

What happens when we suspend our disbelief

The strawberry problem is a charming one, but most of us don’t get paid to spell out the names of fruits. All it really offers is a lesson about the Jagged Frontier. It’s on us to extrapolate to work that actually needs doing in the real world.

Luckily, there are some excellent examples of organizations doing just that—and solving very real, very human problems as a result.

Take GiveDirectly, a nonprofit that distributes cash directly to disaster survivors and people living in extreme poverty. When Hurricanes Harvey and Maria hit Texas and Puerto Rico in 2017, their team deployed the way disaster relief organizations always had: they flew in, surveyed the wreckage, and handed out physical debit cards at rolling enrollment events. Over seven months, they distributed a total of $9.5 million to 6,363 survivors.

By all accounts, this response was a major humanitarian success. At the time, it might have seemed naive to ask “what more can be done?” (And it certainly would have seemed unusual to bring AI into the mix, at least to an outside observer.)

But that’s exactly what the GiveDirectly team did. The people they were reaching had just lost everything, and the team “knew that it [fund distribution] wasn’t fast enough.”

They faced two major bottlenecks. First was the time it took—on the order of weeks—for GiveDirectly staff and volunteers to walk through disaster zones, block by block, to “identify areas that were both high-poverty and high-damage.” Second was the time it took to enroll each recipient in their program (25 minutes) and load the distributed debit cards with funds (up to 2 weeks).

So they started asking an expansive question: not “can AI help us do this faster,” but “how might we help AI do what humans can’t do at all?”

What followed was years of iterative experimentation to reimagine their disaster response process around the capabilities of new technologies.

First, GiveDirectly partnered with Google.org to build an AI (machine learning) model that used satellite imagery to identify damaged homes without responders needing to set foot in the affected area. They then layered on government poverty data, allowing them to zero in on the households most in need after a disaster.

Finally, they replaced their in-person enrollment process with a mobile sign-up through a food-stamp benefits app that was already used (and trusted) by millions of low-income Americans. Because GiveDirectly had “pre-determined their financial and recovery needs” and didn’t require any additional proof of eligibility, enrollment now took only 3 minutes instead of 25: an 88% reduction in processing time.
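The 88% figure follows directly from the two enrollment times (25 minutes before, 3 minutes after):

$$
\frac{25\ \text{min} - 3\ \text{min}}{25\ \text{min}} = \frac{22}{25} = 0.88 = 88\%
$$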

By the time Hurricanes Fiona and Ian arrived in Puerto Rico and Florida in late 2022, GiveDirectly’s remote aid distribution pipeline was fully operational. Over 90% of aid recipients received their payments within 24 hours of enrolling; in 2017, loading a debit card alone could take up to two weeks. The same target outcome, cash in the hands of the people who needed it most, now moved at a completely different speed: GiveDirectly distributed $3.3 million to 4,748 disaster-impacted low-income households in a matter of weeks, where the 2017 response had run for seven months.

Importantly, this pipeline didn’t arrive fully formed. It took five years, multiple partners, and ongoing iteration to make possible.

But GiveDirectly was willing to repeatedly ask a question with an unknowable answer, and it paid off.

Operationalizing the plausible

Of course, GiveDirectly is a major international nonprofit with a $200 million annual budget. Most of us don’t work at organizations with this level of resources and connections; most of us won’t have the opportunity to collaborate with Google.org on a machine learning model.

Still, the “how-might-we” mindset that shaped GiveDirectly’s success with AI is universally applicable. (I’ve compiled a few moving stories of other organizations, including much smaller and scrappier nonprofits, finding incredible AI solutions to hard problems here.)

As you begin to explore how AI might apply to your mission, start from curiosity. Don’t be afraid to get it wrong a few times, as long as you’re experimenting within safe bounds. In the worst-case outcome, you’ll learn something useful about AI and about your organization’s work. In the best-case outcome, you’ll find new, previously unimaginable ways to deliver on your mission.

GiveDirectly began their AI journey with an open-ended question. They couldn’t have jumped straight to satellite imaging models; they lacked the insight needed to make that call upfront. Their mindset enabled the innovation that followed.

When we embrace the plausible, it’s impossible to know where our questions will lead. But being brave enough to ask them is the only way to find out.